Novartis hosts a conference to chart a course toward the medicine of the future

The company calls for working together to extend lives.

Industry

The EC authorizes Lilly to market its KwikPen pre-filled pen with tirzepatide

It will be used for weight management in its obesity and type 2 diabetes indications.

Health policy

The European Union launches the Critical Medicines Alliance

The initiative opens a dialogue among all stakeholders and lays the groundwork for coordinated action.

Industry

Aeseg visits the Sigre Sorting Plant to promote environmental awareness among its members

The visit reinforces the environmental commitment of the generic medicines industry.

The Fundación Vivir Dos Veces is launched to help people with acquired brain injury

Most read

1. The role of nurses in the use of biosimilar medicines
2. 5 benefits of magnesium threonate for our bodies
3. AseBio once again pairs women professionals and students in the Spanish biotechnology sector
4. EIT Health awards 1.5 million euros to Idoven's Assist project for the early diagnosis of heart attacks
5. The Consejo General de Farmacéuticos and GEPAC sign an agreement to strengthen pharmacy-based care for cancer patients

Most viewed

Technology

Fujifilm Healthcare presents a new digital mammography system at the European Congress of Radiology

6 March 2024

Industry

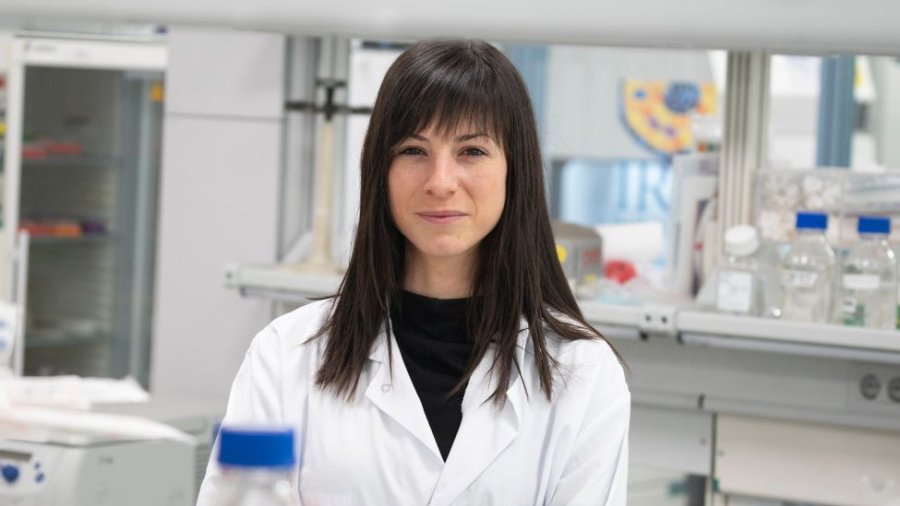

Cristina Mayor-Ruiz wins the Farmaindustria 60th Anniversary Young Researchers Award

16 April 2024

Hospitals

The Hospital Universitario del Henares holds a new meeting of its Patient Council

19 March 2024

The Asociación Española de Cirujanos opens the call for its research project grants

12 March 2024

Opinion

Multidrug-resistant tuberculosis cases are rising; the challenge is to improve diagnosis in the immigrant population

24 March 2024

CSR

Three out of four people with hemophilia in Spain believe they lead a healthy life

17 April 2024